In-context learning (ICL) lets you teach a model how to complete a task by showing it examples rather than explaining the task in detail. This is often more reliable than complex instructions. The technique is also known as few-shot prompting.


How It Works

- You provide examples - input/output pairs showing ideal completions

- At run time, Opper retrieves the relevant ones - those semantically similar to your current input

- Model sees examples in context - and follows the pattern
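The three steps above can be sketched locally. This is a minimal illustration, not Opper's implementation: the `embed` function is a toy bag-of-words stand-in for a real embedding model, and `retrieve`, `build_prompt`, and the sample data are hypothetical names introduced only for this example.

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (assumption: stands in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(examples, query, k=3):
    """Return the k examples whose input is most similar to the query."""
    q = embed(query)
    ranked = sorted(examples, key=lambda ex: cosine(embed(ex["input"]), q), reverse=True)
    return ranked[:k]

def build_prompt(examples, query):
    """Place the selected input/output pairs in context, then the new input."""
    shots = "\n\n".join(f"Input: {ex['input']}\nOutput: {ex['output']}" for ex in examples)
    return f"{shots}\n\nInput: {query}\nOutput:"

# Step 1: you provide input/output pairs (hypothetical ticket-routing data).
EXAMPLES = [
    {"input": "Where is my order?", "output": "shipping"},
    {"input": "I was charged twice for my order", "output": "billing"},
    {"input": "The app crashes on login", "output": "bug"},
    {"input": "How do I reset my password?", "output": "account"},
]

# Step 2: the most similar examples are retrieved for the current input.
query = "I was charged twice this month"
selected = retrieve(EXAMPLES, query, k=2)

# Step 3: the model sees the examples in context, followed by the new input.
prompt = build_prompt(selected, query)
```

With this toy similarity, the billing example ranks first because it shares the most words with the query. A real system would use learned embeddings, so ranking would reflect meaning rather than word overlap.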

Quick Start: Inline Examples

The simplest approach is to pass examples directly in your call. This works well for:

- Prototyping and testing

- Small, fixed example sets

- Edge cases you always want included

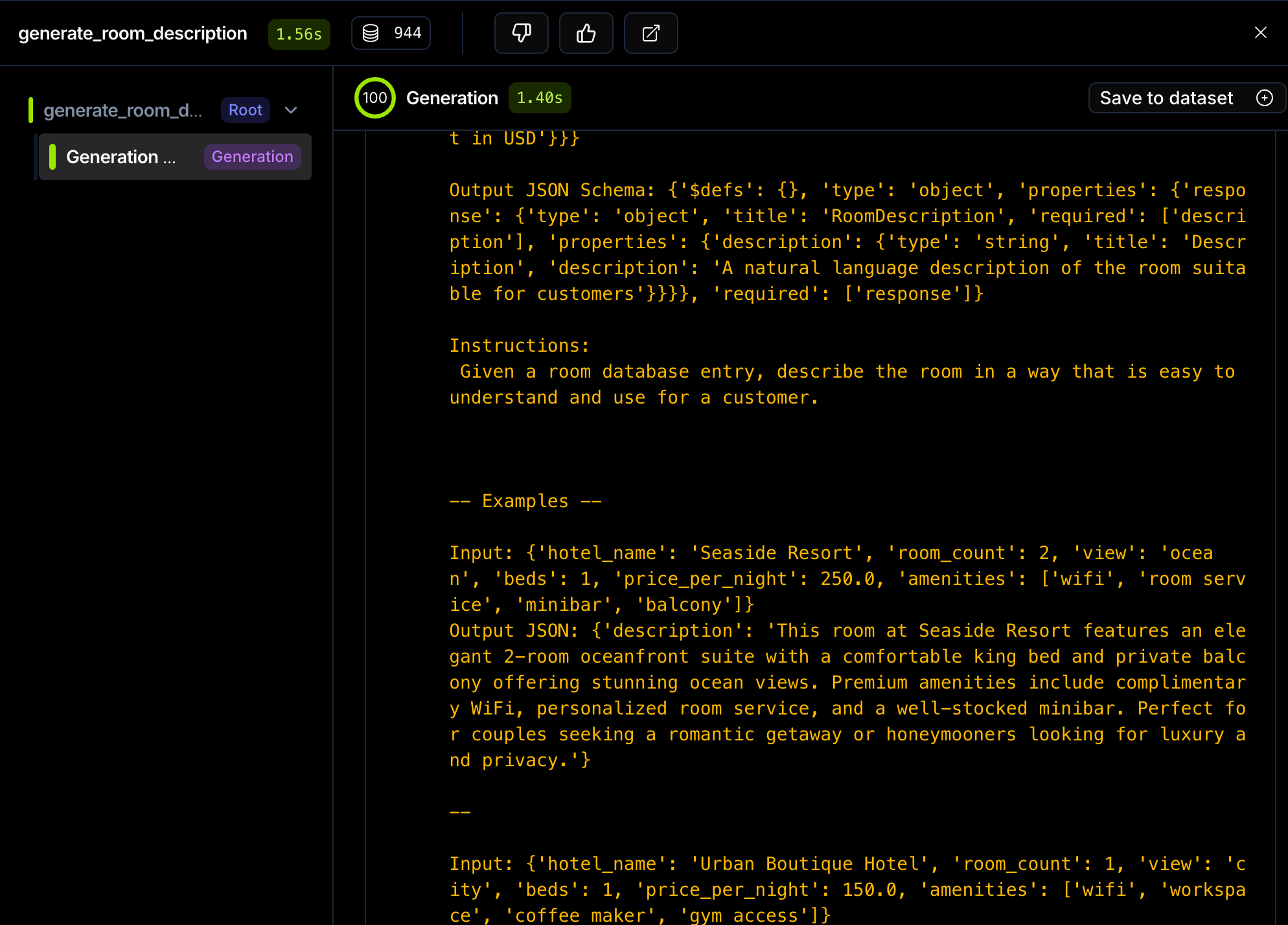
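As a rough sketch of what a small, fixed set of inline examples might look like, the snippet below defines a few input/output pairs, including an edge case that is always present, and renders them as text. The field names `input` and `output` and the helpers `validate_examples` and `render_examples` are assumptions made for illustration; consult the Opper API reference for the actual request shape.

```python
import json

# A small, fixed example set (hypothetical sentiment task).
INLINE_EXAMPLES = [
    {"input": {"text": "Great product, fast delivery"}, "output": {"sentiment": "positive"}},
    {"input": {"text": "Broke after two days"}, "output": {"sentiment": "negative"}},
    # Edge case we always want included: empty text maps to neutral.
    {"input": {"text": ""}, "output": {"sentiment": "neutral"}},
]

def validate_examples(examples):
    """Raise ValueError if any example lacks an 'input' or 'output' key."""
    for i, ex in enumerate(examples):
        missing = {"input", "output"} - ex.keys()
        if missing:
            raise ValueError(f"example {i} is missing {sorted(missing)}")

def render_examples(examples):
    """Render each pair as three lines: a label, the input, and the ideal output."""
    lines = []
    for i, ex in enumerate(examples, start=1):
        lines.append(f"Example {i}")
        lines.append("Input: " + json.dumps(ex["input"], sort_keys=True))
        lines.append("Output: " + json.dumps(ex["output"], sort_keys=True))
    return "\n".join(lines)

validate_examples(INLINE_EXAMPLES)
rendered = render_examples(INLINE_EXAMPLES)
```

Validating the pairs before sending them is cheap and catches malformed examples early, which matters because a single bad example can steer the model toward the wrong pattern.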

Managed Examples with Datasets

For production use, store examples in a dataset attached to a function. Opper automatically retrieves the most relevant examples for each call.When you use

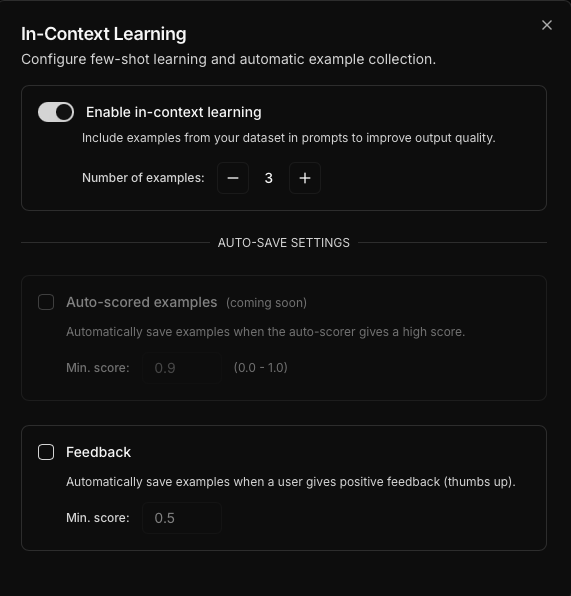

/call, Opper automatically creates a function configured to use 3 examples by default. So you need to start populating your dataset. You can view and adjust this configuration in the platform.

Step 1: Populate the Dataset

There are three ways to add examples:

Option A: Automatic via Feedback (Recommended)

The most common approach is to save good outputs automatically through the feedback endpoint. When you make a call, you get a span_id back. If the output is good, submit positive feedback for that span and the output is saved to the dataset.

By default, all positive feedback (score=1.0) is automatically saved to the function’s dataset.

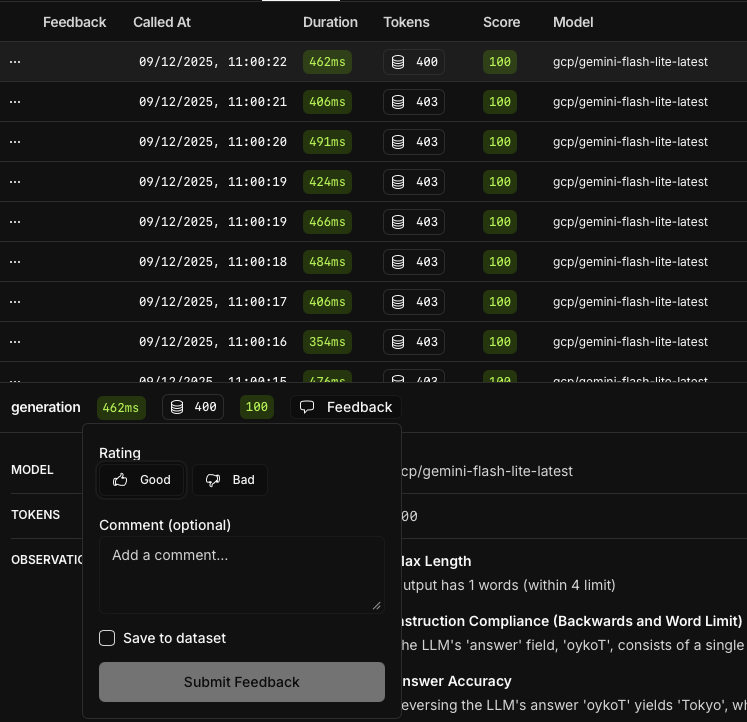
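The feedback rule described in this option can be modeled in a few lines. The sketch below is a local simulation only: `Span`, `FeedbackStore`, `record_call`, and `submit_feedback` are hypothetical names, and nothing here calls the real feedback endpoint. It assumes, as stated above, that a score of 1.0 is what triggers saving.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class Span:
    """One recorded call: its identifier plus the input and output it produced."""
    span_id: str
    input: str
    output: str

@dataclass
class FeedbackStore:
    """In-memory stand-in for a function's traces and dataset (illustration only)."""
    save_threshold: float = 1.0  # assumption: only score=1.0 counts as positive
    spans: Dict[str, Span] = field(default_factory=dict)
    dataset: List[dict] = field(default_factory=list)

    def record_call(self, span: Span) -> str:
        """Remember the span so feedback can refer to it later by span_id."""
        self.spans[span.span_id] = span
        return span.span_id

    def submit_feedback(self, span_id: str, score: float) -> bool:
        """Save the span as an example if the score is positive; report whether it was saved."""
        span = self.spans[span_id]
        if score >= self.save_threshold:
            self.dataset.append({"input": span.input, "output": span.output})
            return True
        return False

store = FeedbackStore()
good_id = store.record_call(Span("span-1", "Translate 'hello' to French", "bonjour"))
bad_id = store.record_call(Span("span-2", "Translate 'cat' to French", "chien"))

saved_good = store.submit_feedback(good_id, 1.0)  # positive feedback: saved
saved_bad = store.submit_feedback(bad_id, 0.0)    # negative feedback: not saved
```

Keying feedback on the span identifier means the application does not need to resend the input and output; it only has to hold on to the identifier returned by the call.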

Option B: Manual Curation in Platform

Best for subject matter experts who want to review and curate examples directly.

- Go to Traces in the Opper platform

- Find a successful completion

- Click the feedback button to rate the output

- Positive feedback automatically saves to the dataset

Option C: Batch Upload from Code

Best for seeding initial examples or migrating from existing datasets.

Step 2: Call the Function

Calls to the function now automatically include relevant examples from the dataset.

Building a Feedback Loop

A common pattern is to collect feedback from your users automatically and let good outputs improve future ones. How it works:

- You make a call - your application calls an Opper function

- User provides feedback - rate responses with thumbs up/down

- Opper learns from feedback - positive feedback auto-saves to the dataset

- Future calls improve - new examples guide better outputs

Automated Evaluation with Observer

For high-volume applications, you can automate the feedback process entirely using the Observer. Instead of waiting for human feedback, the Observer automatically evaluates outputs and saves high-quality examples to your dataset. How it works:

- The Observer watches your function - monitors all completions

- Auto-scores outputs - evaluates quality based on your criteria

- Captures good examples - high-scoring outputs auto-save to dataset

- Continuous improvement - your function improves without manual intervention
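The automated loop above can be approximated with a scoring function and a threshold. This is a conceptual sketch, not how the Observer is implemented: the criteria, the `score_output` and `observe` helpers, and the threshold of 1.0 are all assumptions chosen for illustration, and a real evaluator would typically use a model rather than simple checks.

```python
from typing import Callable, List, Tuple

# A criterion takes (input, output) and returns True when the output passes.
Criterion = Callable[[str, str], bool]

ALLOWED_LABELS = {"billing", "shipping", "bug", "account"}  # hypothetical label set

CRITERIA: List[Criterion] = [
    lambda inp, out: bool(out.strip()),      # output is not empty
    lambda inp, out: out in ALLOWED_LABELS,  # output is a known label
    lambda inp, out: out == out.lower(),     # output uses lowercase
]

def score_output(inp: str, out: str, criteria: List[Criterion]) -> float:
    """Score an output as the fraction of criteria it satisfies (0.0 to 1.0)."""
    if not criteria:
        return 0.0
    return sum(1 for check in criteria if check(inp, out)) / len(criteria)

def observe(completions: List[Tuple[str, str]], criteria: List[Criterion],
            dataset: List[dict], threshold: float = 1.0) -> int:
    """Score every completion and save those at or above the threshold; return the count saved."""
    saved = 0
    for inp, out in completions:
        if score_output(inp, out, criteria) >= threshold:
            dataset.append({"input": inp, "output": out})
            saved += 1
    return saved

dataset: List[dict] = []
completions = [
    ("I was charged twice", "billing"),       # passes all three checks
    ("My parcel is late", "Shipping"),        # fails: unknown label, not lowercase
    ("The app crashes on login", ""),         # fails: empty and unknown label
]
saved_count = observe(completions, CRITERIA, dataset)
```

A strict threshold keeps the dataset small but clean. Lowering it captures more examples at the cost of letting weaker outputs guide future calls.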